The 6% Fallacy: Why the ‘Death of SaaS’ is Mathematically Wrong

- February 24, 2026


There is a slide circulating in Silicon Valley boardrooms right now that seems to terrify investors. It plots the marginal cost of generating code against the enterprise value of SaaS companies. The narrative is simple and seductive: AI drives the cost of code generation to zero, so the value of the entire software industry must collapse.

It is a compelling piece of logic. It is also mathematically and operationally wrong. The current bear case for SaaS rests on a fatal error. It assumes customers are paying software vendors just for the syntax. They aren't. They are paying for a service that guarantees a specific business outcome.

When you deconstruct the P&L of a modern SaaS company, it becomes clear that AI won’t replace SaaS. It simply separates the winners who architected for intelligence from the losers who were just selling forms over databases. SaaS isn’t dying. It is becoming the necessary shield between the customer and the complexity of the AI era.

1. The Service Is the Moat.

To understand why SaaS endures, we must split the acronym.

‘Software’ (The Implementation): The bits and bytes, meaning the UI, the database schemas, and the logic. In an AI world, the act of writing code becomes a commodity.

‘Service’ (The Value): This is what enterprises buy. The Service includes availability (99.99% uptime), security compliance (SOC 2, HIPAA), data governance, and customer support.

Writing code may be a commodity, but knowing what to write remains scarce. The domain expertise, the intricate workflow logic, and the understanding of the customer's business cannot be automated. AI can lay the bricks, but it still requires an architect with deep industry knowledge to draw the blueprints.

Even if AI writes the code, who wakes up at 3:00 AM when the server goes down? Who assumes liability if the data is leaked? Who defines the roadmap when regulations change?

The ‘service’ wrapper around the software is what protects the customer from the chaos of raw code. As code becomes cheaper, the trust provided by the service becomes the premium asset.

2. The Math: Why AI Only Impacts 6% of the P&L.

Let’s look at the hard math. The ‘Death of SaaS’ narrative assumes that code writing is 90% of a company's cost structure. It is not.

While early-stage startups do spend heavily on initial build, the steady state of a profitable SaaS company is a distribution engine, not a coding shop. For mature, efficient SaaS companies, the actual R&D budget is often between 10% and 25% of total revenue. Most costs go to sales, marketing, customer success, hosting, and operations.

Drilling into that R&D budget:

- The ‘Coding’ Fraction: Engineers only spend about 25% of their time writing code. The other 75% is spent on high-value tasks: architectural decisions, domain modeling, and interpreting user needs.

- The Math: 25% (coding time) × 25% (R&D as a share of revenue, the top of the range) ≈ 6% impact.
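The arithmetic above is easy to check across the whole range. The sketch below assumes the article's figures (coding at 25% of engineering time, R&D at 10% to 25% of revenue) and that R&D spend scales with engineering time; the function name `coding_share_of_revenue` is illustrative.

```python
def coding_share_of_revenue(coding_time_fraction: float, rnd_fraction: float) -> float:
    """Fraction of total revenue spent on the act of writing code.

    Assumes R&D spend is proportional to engineering time, so the coding
    fraction of time maps directly onto the coding fraction of the R&D budget.
    """
    return coding_time_fraction * rnd_fraction


CODING_TIME = 0.25  # engineers spend ~25% of their time writing code (article's figure)

for rnd in (0.10, 0.25):  # R&D as a share of revenue: low and high end of the range
    share = coding_share_of_revenue(CODING_TIME, rnd)
    print(f"R&D at {rnd:.0%} of revenue -> coding is {share:.2%} of revenue")
```

Even at the top of the range the code-writing share is about 6% of revenue (6.25%); at the low end it is 2.5%.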

AI makes the creation of software slightly cheaper, but it doesn't fundamentally change the cost model. It simply frees R&D to focus on the hardest part of software: defining the solution.

However, incumbents cannot get complacent. While the cost savings are minimal, the velocity risk is existential. A challenger won't beat you because they are cheaper. They will beat you because they can innovate significantly faster. The goal of AI isn't to cut the budget; it's to double the shipping speed.

3. The Hidden Cost of Inference: Why AI Establishes an Economic Price Floor.

Here is the point the ‘SaaS is dead’ crowd misses: While one-time development costs might dip, the recurring operating costs will increase structurally. Software does not run on magic; it runs on hardware.

- Old World: Traditional software is Deterministic. It runs on CPUs. It is efficient, cheap, and predictable.

- AI World: AI-native software is Probabilistic. It runs on GPUs and depends on compute-intensive inference.

Every time a user interacts with a ‘smart’ system by asking a natural language question or requesting a summary, that request burns significantly more electricity and hardware cycles than a traditional database query.

We are trading a 6% reduction in development costs for an explosion in recurring operational costs.

‘Service’ Becomes the Optimization Layer

Because runtime costs will increase, the role of the SaaS vendor becomes even more critical. They act as an arbiter of efficiency. They must manage a hybrid infrastructure: keeping the Deterministic systems for the 90% of tasks that need to be fast, cheap, and perfect (transactions, storage), and injecting Probabilistic AI only where it adds high-value reasoning.
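A minimal sketch of that arbitration, assuming a simple rule-based router: structured, transactional requests go to deterministic handlers, and only open-ended natural-language requests reach a model. The request kinds and handler names are hypothetical, and `call_llm` is a stub standing in for whatever inference service a vendor uses; no real model API is implied.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Request:
    kind: str  # e.g. "get_order" or "summarize" (hypothetical request kinds)
    payload: dict


def get_order_total(payload: dict) -> str:
    # Deterministic path: plain arithmetic, the same input always gives the same output.
    total = sum(line["qty"] * line["unit_price"] for line in payload["lines"])
    return f"{total:.2f}"


def call_llm(prompt: str) -> str:
    # Hypothetical stub for a probabilistic model call (GPU inference in practice).
    return f"[model summary of: {prompt[:40]}]"


def summarize(payload: dict) -> str:
    # Probabilistic path: used only where reasoning over free text adds value.
    return call_llm(payload["text"])


DETERMINISTIC: dict[str, Callable[[dict], str]] = {"get_order": get_order_total}
PROBABILISTIC: dict[str, Callable[[dict], str]] = {"summarize": summarize}


def route(req: Request) -> tuple[str, str]:
    """Return (path, result), preferring the cheap deterministic path."""
    if req.kind in DETERMINISTIC:
        return "deterministic", DETERMINISTIC[req.kind](req.payload)
    if req.kind in PROBABILISTIC:
        return "probabilistic", PROBABILISTIC[req.kind](req.payload)
    raise ValueError(f"no handler for request kind {req.kind!r}")


order = Request("get_order", {"lines": [{"qty": 2, "unit_price": 9.5}, {"qty": 1, "unit_price": 3.0}]})
print(route(order))  # ('deterministic', '22.00')
```

The design choice is that the deterministic table is consulted first, so the expensive path is opt-in per request kind rather than the default.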

This hybrid reality exposes the fatal flaw in the disruption narrative.

The prevailing fear is that a solo developer will generate a clone of Salesforce, Workday or ServiceNow in a weekend and sell it for pennies. But to compete in this new era, that clone cannot just be a form over a database; it must be intelligent. It must use AI at runtime.

Even if a challenger generates the code for free, they cannot run it for free. They face a high ‘Cost of Goods Sold’ in the form of inference bills. They cannot undercut the market significantly because they must cover their own expensive compute, above all GPU usage.

This creates a hard economic price floor. The narrative that AI will drive a ‘race to the bottom’ in pricing is mathematically flawed. There is no scenario where the total cost of delivering a smart, reasoning system drops. The cost base is increasing, and consequently, the value of the software and its price will likely rise. However, this pricing power is reserved for those who master the hybrid architecture required to keep costs in check.
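The price-floor argument can be written as simple unit economics. If a vendor targets a gross margin m and carries a per-user cost of goods sold made up of hosting plus inference, the minimum sustainable price is COGS / (1 − m). The inputs below are hypothetical placeholders chosen only to show the shape of the relationship, not measured costs.

```python
def price_floor(hosting_cost: float, inference_cost: float, target_gross_margin: float) -> float:
    """Minimum monthly price per user that still achieves the target gross margin.

    gross margin = (price - cogs) / price  =>  price = cogs / (1 - margin)
    """
    if not 0 <= target_gross_margin < 1:
        raise ValueError("target_gross_margin must be in [0, 1)")
    cogs = hosting_cost + inference_cost
    return cogs / (1 - target_gross_margin)


# Hypothetical placeholder inputs, in dollars per user per month.
HOSTING = 2.0
MARGIN = 0.75  # hypothetical target gross margin

for inference in (0.0, 8.0):  # a "form over a database" vs. a clone that uses AI at runtime
    floor = price_floor(HOSTING, inference, MARGIN)
    print(f"inference ${inference:.2f}/user -> floor ${floor:.2f}/user")
```

Whatever the real figures are, the floor rises linearly with inference cost, so a challenger who generated its code for free still cannot price below it without giving up margin.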

4. The Deterministic Spine: Blueprint for an AI-Native System.

If the economics hold, the question becomes: how do you build for this future? Most people assume an AI-native system is one where an LLM (like Claude or ChatGPT) writes the code on the fly. The narrative suggests that in the future, every action will be determined by an LLM.

While this spirit of innovation is exciting, it fails the ‘enterprise reality check.’

In mission-critical industries (like supply chain or banking), ‘probably right’ is effectively the same as ‘wrong.’ Enterprises cannot afford a production system where an LLM generates different code on Tuesday than it did on Monday.

Real enterprise value lies in reliability, not just creativity. The true ‘AI-native’ system isn't one written by AI, but one architected to be safely orchestrated by AI.

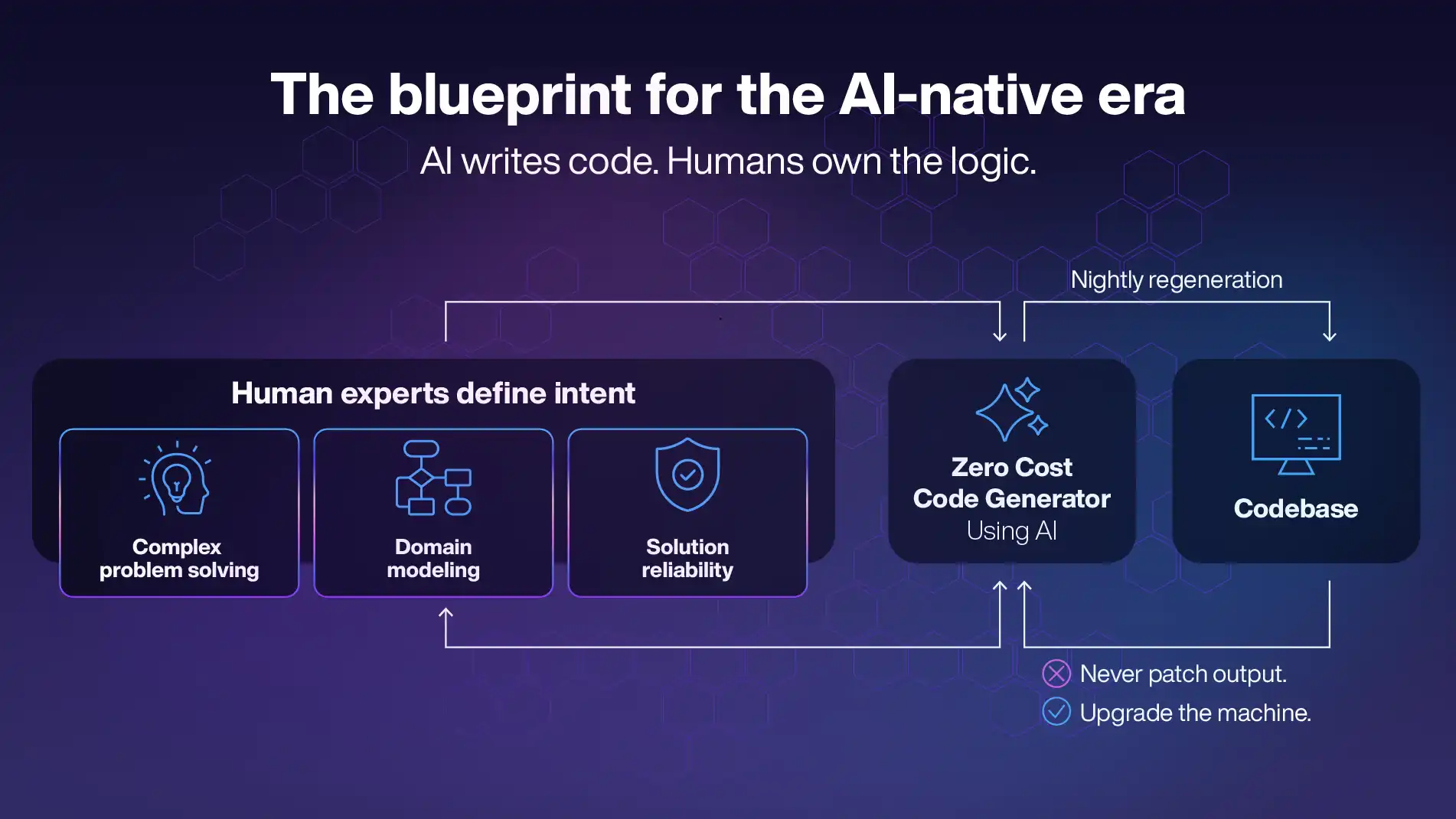

Intent Over Syntax: A Decade of Zero-Cost Code

We don't have to guess what happens to a business model when code generation becomes free. We have had a testing ground for this for a decade.

It’s called intent-based architecture. This isn’t theoretical; we have been using this model at Manhattan Associates, where we shifted our focus from writing syntax to capturing business intent over a decade ago. The philosophy was simple: humans should define the what (business logic and rules), and machines should generate the how (code).

Consequently, we do not maintain a static legacy codebase. Instead, we regenerate about 75% of more than 60 million lines of code every night based on these intent definitions.

This operational reality offers a critical economic lesson. We have effectively been running a decade-long case study for the ‘zero marginal cost of code’ era. Because most of our code is machine-generated, the ‘cost’ of writing that syntax is already negligible. However, this efficiency did not make our total development costs zero. Instead, it allowed us to reallocate those resources entirely into the ‘Service’ - the complex problem-solving, domain modeling, and reliability that customers actually buy.

This massive nightly regeneration isn't just a vanity metric; it is the ultimate hedge against technical debt. Our system effectively never ages because it is reborn every 24 hours. Because the code is machine-generated from strict logic rules rather than probability, it is deterministic. If the system calculates 2+2, it will always equal 4. There is no hallucination in the business logic. All AI-native systems require a ‘deterministic spine’ - a rigid, reliable core that does exactly what it is told.
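The idea of a deterministic generator can be illustrated in miniature. This sketch is not Manhattan Associates' generator, whose internals the article does not describe; it assumes a toy, hypothetical intent format (an entity name plus validation rules) and emits Python source from it. Because generation is a pure function of the intent, every regeneration produces identical code, and the generated logic gives the same answer for the same input.

```python
# Hypothetical intent definition: humans state *what* must hold, not *how*.
INTENT = {
    "entity": "Shipment",
    "rules": [
        ("weight_kg", ">", 0),
        ("weight_kg", "<=", 1000),
    ],
}

ALLOWED_OPS = {">", ">=", "<", "<=", "==", "!="}


def generate(intent: dict) -> str:
    """Emit Python source for a validator. A pure function: same intent, same code."""
    name = intent["entity"].lower()
    lines = [f"def validate_{name}(record):"]
    for field, op, value in intent["rules"]:
        if op not in ALLOWED_OPS:
            # The generator's scope is explicit; anything outside it is rejected.
            raise ValueError(f"unsupported operator {op!r}")
        lines.append(f"    if not (record[{field!r}] {op} {value!r}):")
        lines.append("        return False")
    lines.append("    return True")
    return "\n".join(lines) + "\n"


# Two independent "nightly" builds from the same intent yield identical source.
build_a = generate(INTENT)
build_b = generate(INTENT)
print(build_a == build_b)  # True

namespace: dict = {}
exec(build_a, namespace)  # load the generated code; a real pipeline would compile it in a build
validate_shipment = namespace["validate_shipment"]
print(validate_shipment({"weight_kg": 250}), validate_shipment({"weight_kg": 0}))  # True False
```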

This approach also enables another major advantage: the ability to instantly inject modern capabilities across a massive surface area. For example, when we recently wanted to add natural-language, human-readable audits generated by LLMs, we simply updated the central code generator. Within one build cycle, the capability propagated across the entire portfolio of solutions.

This is the blueprint for the AI-native era. Instead of asking AI to write disposable, risky code on the fly, the sustainable approach is to let humans define the business intent and let machines manage the code generation. If a requirement exceeds the generator's current scope, you simply upgrade the generator. You never patch the output; you upgrade the machine.
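The ‘upgrade the machine, never patch the output’ rule can be sketched with a toy, hypothetical generator that emits one save function per entity from a single central template. Adding a capability, here an audit hook, means changing the template once; the next build carries it to every entity. The audit line in this sketch only records which fields were saved; in the article's example it would call an LLM to write a human-readable audit, which is not attempted here.

```python
ENTITIES = ["Order", "Shipment", "Invoice"]  # hypothetical portfolio of entities


def generate(entity: str, audit: bool) -> str:
    """Emit source for one entity's save function from a single central template."""
    name = entity.lower()
    body = [f"def save_{name}(record, log):"]
    if audit:
        # New capability added once, in the generator, never in the generated output.
        body.append(f"    log.append('{entity} saved: ' + ', '.join(sorted(record)))")
    body.append(f"    return dict(record, entity={entity!r})")
    return "\n".join(body) + "\n"


def build(audit: bool) -> dict:
    """One build cycle: regenerate and load every entity's code."""
    namespace: dict = {}
    for entity in ENTITIES:
        exec(generate(entity, audit), namespace)
    return namespace


log: list = []
ns = build(audit=True)  # upgraded generator, rebuilt portfolio
for entity in ENTITIES:
    ns[f"save_{entity.lower()}"]({"id": 1, "qty": 3}, log)
print(log)  # ['Order saved: id, qty', 'Shipment saved: id, qty', 'Invoice saved: id, qty']
```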

Composability: Giving AI a Reliable Toolset

Once you have a deterministic core, you need a safe way for AI to interact with it. This brings us to composability.

By decoupling the logic from the screen via an API-first architecture, the modern SaaS platform is transformed into a set of reliable tools that any AI agent can use. An AI agent doesn't need to know how to execute a complex supply chain calculation. It just needs to know which API endpoint to call to get the result.

The AI acts as the orchestrator, and the API is the reliable toolset.
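A minimal sketch of ‘AI as orchestrator, API as toolset’, assuming a hypothetical in-process tool registry in place of real HTTP endpoints. Each tool is a deterministic function with a name and a description an agent could read; the agent's only job is to pick a tool and supply arguments. The plan is hard-coded here as a stand-in for a model's choice, and no specific agent framework or tool-calling API is implied. The reorder-point formula (daily demand × lead time + safety stock) is a standard textbook example, used only for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Tool:
    name: str
    description: str  # what an agent would read when choosing a tool
    fn: Callable[..., Any]


def reorder_point(daily_demand: float, lead_time_days: float, safety_stock: float) -> float:
    # Deterministic supply-chain calculation: the agent never reimplements this.
    return daily_demand * lead_time_days + safety_stock


REGISTRY = {
    t.name: t
    for t in [
        Tool("reorder_point", "Inventory level that should trigger a reorder.", reorder_point),
    ]
}


def execute(plan: dict) -> Any:
    """Run one tool call chosen by the orchestrator; unknown tools are rejected."""
    tool = REGISTRY.get(plan["tool"])
    if tool is None:
        raise KeyError(f"unknown tool: {plan['tool']}")
    return tool.fn(**plan["args"])


# Hypothetical stand-in for a model's tool choice.
plan = {"tool": "reorder_point", "args": {"daily_demand": 40, "lead_time_days": 5, "safety_stock": 60}}
print(execute(plan))  # 260
```

Rejecting unknown tool names keeps the probabilistic layer confined to choosing among operations the deterministic core already guarantees.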

Constructing the Perfect Hybrid

This structure creates the perfect blend of probabilistic (AI) and deterministic (system) workflows, optimizing both latency and cost. We don't use expensive, hallucination-prone AI to do the ‘boring’ work of database management. We let efficient, generated code handle that. We only use the expensive AI for reasoning when it is required.

5. Conclusion: The Probabilistic Brain on a Deterministic Spine.

SaaS isn't dying; it is shedding its skin.

The argument that ‘cheap code makes software worthless’ ignores the reality that successful software companies moved past selling ‘code’ years ago.

The construction of ‘software’ is becoming a commodity, which is great. It lowers costs and speeds up innovation. But the ‘service,’ where the domain expertise, architecture, and guarantee of reliability come from, is more valuable than ever.

We didn't know a decade ago that we were building for an agentic future. We simply believed that consistency, composability, and efficiency were the only ways to build at scale. It turns out, those principles are the exact requirements for an AI-native system: a probabilistic brain combined with a deterministic spine.

While the market worries about AI writing code, the winners will be the ones using AI to strengthen the service they sell, ready to plug into whatever interface the future holds.